Unlock all 500 years of our collections for free

Start your family history journey

Discover where your past will take you.

What would you like to do?

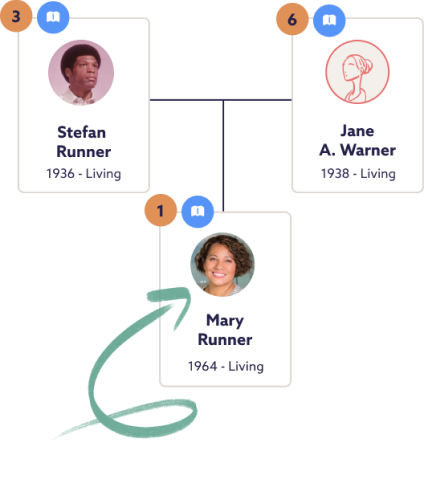

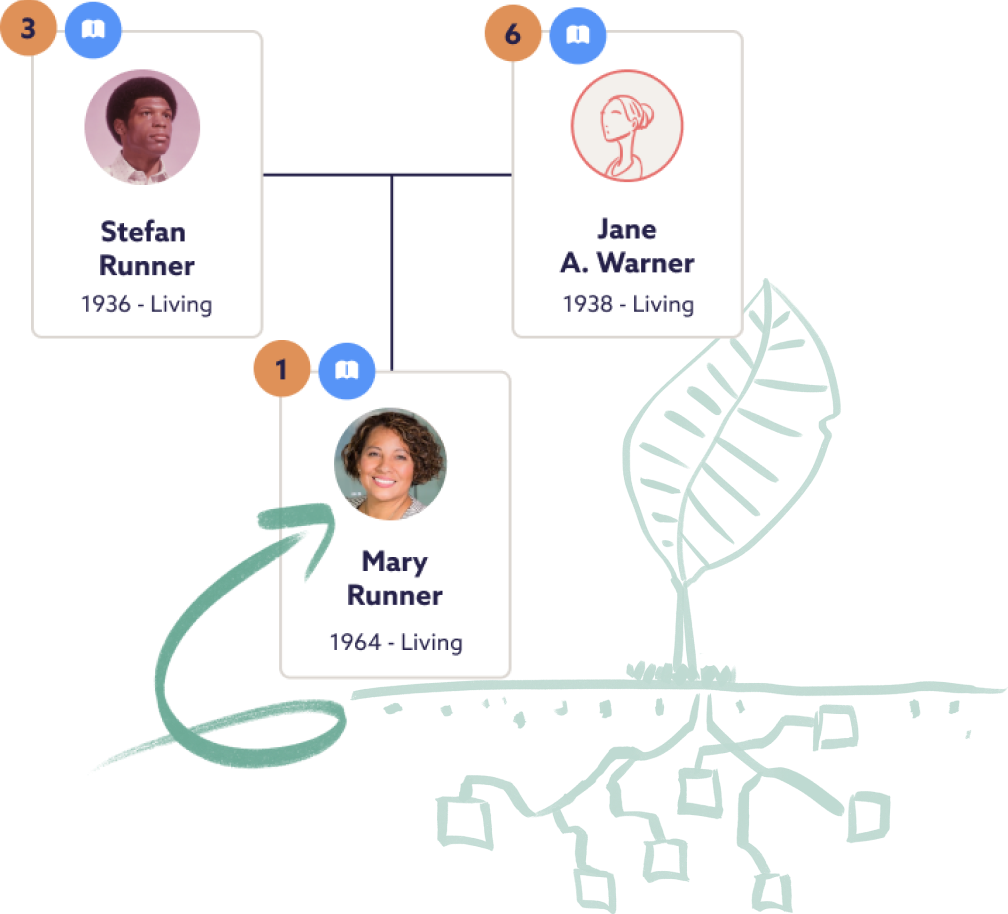

Get started with our family tree builder 1

Add the family members you know, and we’ll start our detective work. We’ll send you a hint when we find something interesting.

2

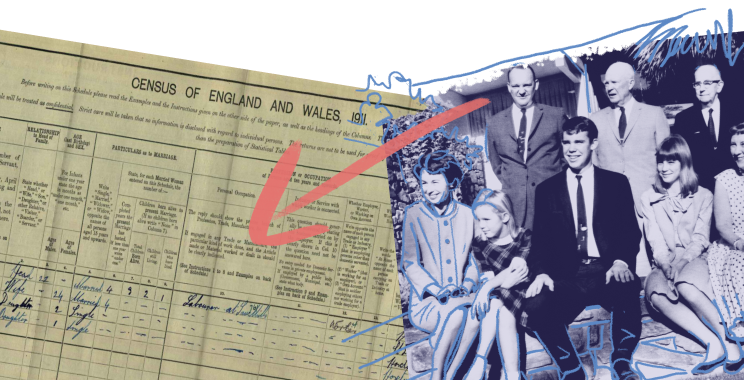

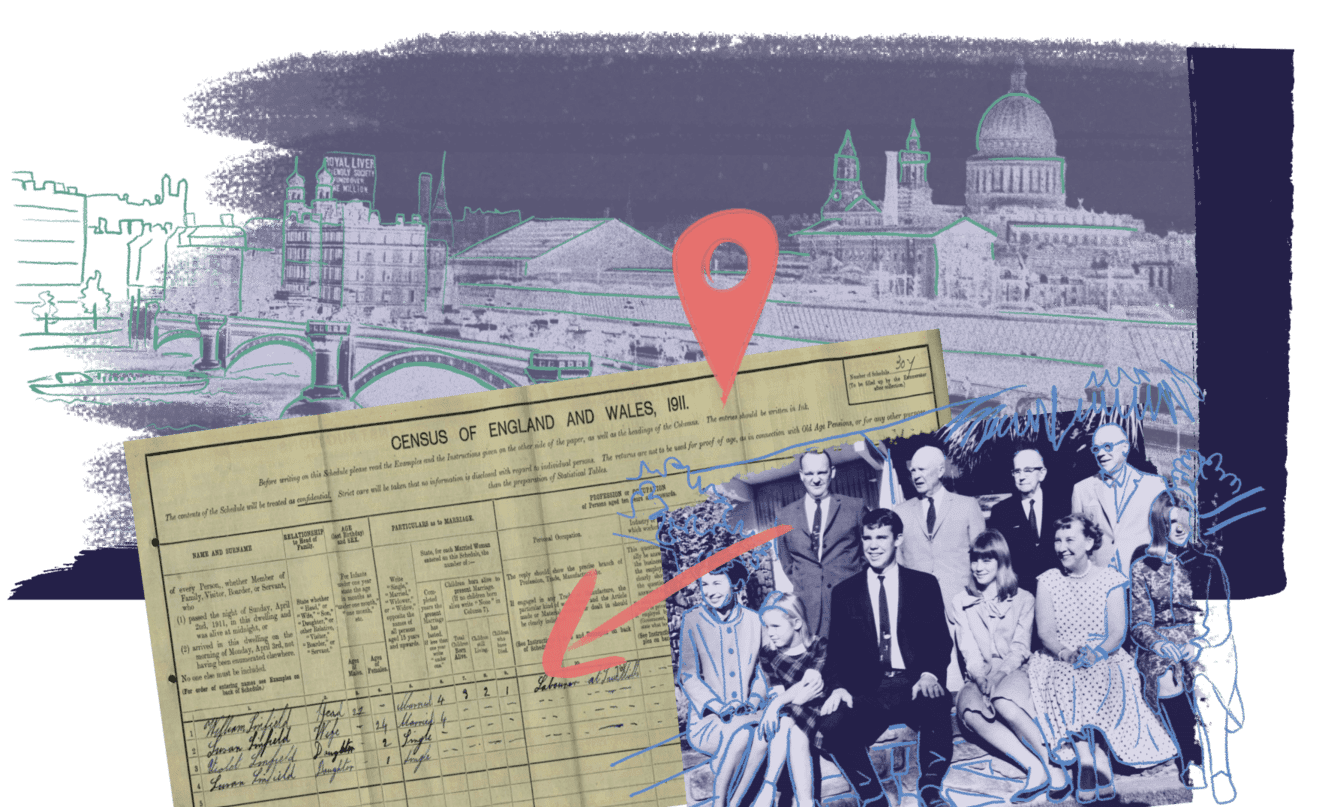

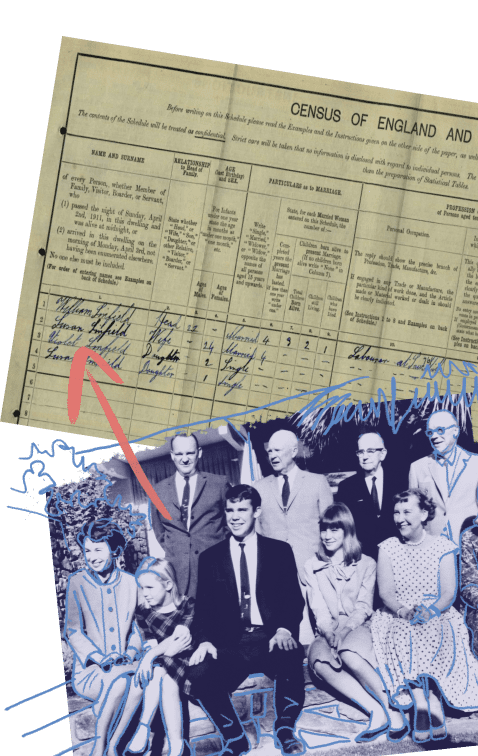

Track down relatives in over 10 billion genealogy records, including the 1921 Census, and keep growing your family tree.

3

Discover shared connections in over 4.5 million family trees built by Findmypast members.

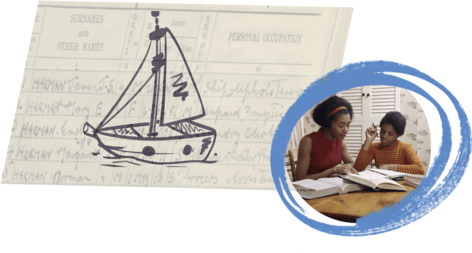

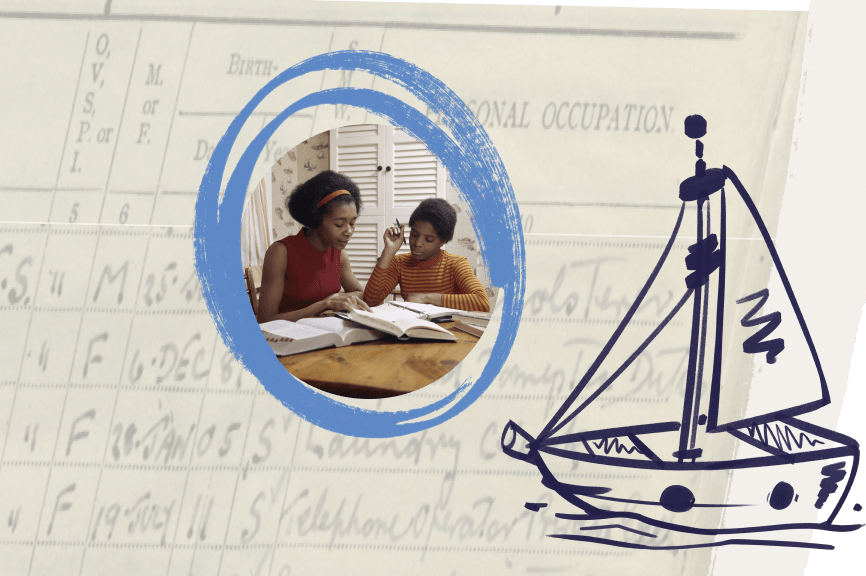

Wendy’s Story

The 1921 Census: Community Discoveries

“After searching for many years and finding dead ends, I finally found my grandfather in the 1921 Census on Findmypast!”

Wendy found their family members in the 1921 Census using the Findmypast family tree builder, helped by hints we generated from our exclusive census records.

What family stories might you discover?

We’re here to help you on your genealogy journey

Our friendly Dundee-based customer support team can answer any questions you might have, every step of the way.

Join our community of over 100,000 researchers

Share your journey with the Findmypast genealogist community on Facebook, or learn research skills at your own pace on our YouTube channel and our helpful genealogy blog.

Make the most of all our amazing partnerships

The National Archives is home to over 1,000 years of precious British history. Together, we’re preserving that history forever by making it accessible online.

Back in 2011, we set out to bring the British Library’s entire newspaper archive online. Millions of digitised pages later, our journey with the UK’s national library continues.

Thanks to our long-standing partnership with the Family History Federation, we’re home to millions of records you won’t find anywhere else online.

Search for a relative in over 4 million family trees

Trace your family back in time and see what other Findmypast family historians in our genealogy community have discovered about your ancestors.

Do you have a family tree somewhere else? Don’t worry, you don’t have to start again. You can import your family tree from another genealogy website as a GEDCOM file.

Try us free for 7 days.

Get started with essential family history records